Adapting Voice Assistance for Business: The Walk

In our previous post, we discussed how integrating Oracle JD Edwards with Amazon’s Alexa could improve business efficiency with the help of voice input. In this blog post, I’ll explain how our team initially configured Oracle JD Edwards business services with Alexa’s “Skills.”

User: Alexa, open Address Book Manager.

Alexa: Address Book Manager. What address book ID would you like to look up?

User: Look up address book id: 20.

Alexa: Address book id: 20 belongs to Universal Supplies. What other address book ID would you like to look up?

User: Exit.

Voice Input

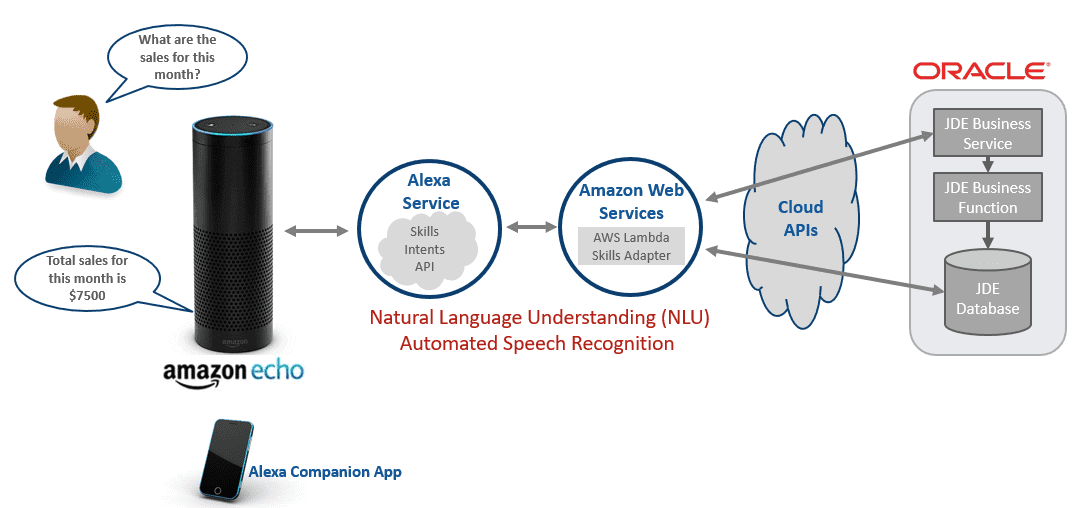

The journey to retrieve data from Oracle JD Edwards (JDE) EnterpriseOne (E1) involved two different configurations. I’ll briefly explore our approaches and identify the challenges my team faced while developing skills for Alexa.

Most devices receive input from the user through a controller (such as a mouse) or via touch. With the rise of Apple’s Siri, Microsoft’s Cortana and Amazon’s Alexa, voice input is becoming commonplace, but voice output is just getting its start. It was not long ago that our GPS devices began speaking real-time directions to us, effectively using voice to represent our position on a map. According to the HMI Handbook, sound now has a place in operations, alerting users to changes. Our team hopes to close the gap between reality and practicality, especially in business environments, by extending our development services for JDE E1 through Alexa.

Developing for Amazon Alexa

Amazon has provided an excellent guide for those looking to develop “Skills” for Alexa. We configured our Alexa Skills via the developer portal, then used Amazon Web Services (AWS) Lambda to execute our code. Since our developers didn’t have to set up hardware, they could concentrate on what they do best.

Developing the skill and the function for AWS Lambda didn’t require us to create a development environment or manage servers. AWS Lambda handles the code execution and makes it easier for us to focus on developing the skills. However, to enable the communication between Alexa and JDE EnterpriseOne, our local development environment did require some setup and configuration.
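To make the Lambda side more concrete, here is a minimal sketch of a handler for the Address Book Manager dialogue shown at the top of this post. It is written in Python for illustration and is not our production code. The intent name `LookupAddressBookIntent`, the slot name `AddressBookId`, and the in-memory `ADDRESS_BOOK` dictionary (standing in for a real JDE lookup) are all assumptions made for this example.

```python
# Hypothetical stand-in for a JDE lookup; a real skill would call a
# business service here instead of reading from a dictionary.
ADDRESS_BOOK = {"20": "Universal Supplies"}

PROMPT = "What address book ID would you like to look up?"


def build_response(text, end_session=False):
    """Wrap plain text in the JSON envelope Alexa expects from a skill."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def lambda_handler(event, context):
    """Entry point invoked by AWS Lambda for each Alexa request."""
    request = event["request"]

    if request["type"] == "LaunchRequest":
        return build_response("Address Book Manager. " + PROMPT)

    if request["type"] == "IntentRequest":
        intent = request["intent"]
        # "LookupAddressBookIntent" and "AddressBookId" are assumed names.
        if intent["name"] == "LookupAddressBookIntent":
            slots = intent.get("slots", {})
            ab_id = slots.get("AddressBookId", {}).get("value")
            name = ADDRESS_BOOK.get(ab_id)
            if name is None:
                return build_response("I could not find that ID. " + PROMPT)
            return build_response(
                f"Address book id: {ab_id} belongs to {name}. " + PROMPT
            )
        if intent["name"] in ("AMAZON.StopIntent", "AMAZON.CancelIntent"):
            return build_response("Goodbye.", end_session=True)

    # SessionEndedRequest (and anything unrecognized): return no speech.
    return {"version": "1.0", "response": {}}
```

Keeping the response envelope in one helper means each branch only has to decide what Alexa should say and whether the session should stay open.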

Configuring JDE

There were many options to access the data from JD Edwards:

- Establish a connection to the database

- Use Web Services to produce and consume data, via business services

- Use AIS Server endpoints to access EnterpriseOne data

We could successfully establish a connection and retrieve data either by connecting to the database or by developing business services, as shown here:

Speed, Security, and Systems

At first, we were overjoyed when we could finally retrieve data from JDE. We tried once more for a different client, and Alexa repeated the data back to us. The next step was to demonstrate the skill during our stand-up meeting. In our first scenario, we presented an option: users could retrieve total sales or sales by client. When users opted for total sales, Alexa would take a few seconds longer to report the results. In some cases, Alexa would remain quiet indefinitely. This concerned us, but we weren’t yet aware that these long pauses were due to latency. As we continued to make incremental changes, Alexa increasingly failed to provide results in a timely manner. And as time passed, we found that latency wasn’t our only issue.

Previously, we could establish two types of connections to JDE to retrieve data. The connection to the database required firewall changes to allow inbound connections. A few weeks into development, our database server was compromised, which required us to rethink our configuration. Although the only affected data was demo data and no serious damage was done to our production systems, the breach prompted us to address both the latency and security issues. To address the security compromise, we restricted public access to the database, allowing connections only from a specific source.
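The restriction itself lived in our firewall rules, but the logic behind a source allowlist is simple enough to sketch in a few lines of Python. The network below is a placeholder taken from a range reserved for documentation, not our actual configuration, and the names `ALLOWED_SOURCES` and `is_allowed` are made up for this illustration.

```python
import ipaddress

# Placeholder network from a range reserved for documentation;
# substitute the real source address or range for your environment.
ALLOWED_SOURCES = [ipaddress.ip_network("203.0.113.0/24")]


def is_allowed(client_ip: str) -> bool:
    """Return True only if client_ip falls inside an allowed network."""
    addr = ipaddress.ip_address(client_ip)
    return any(addr in network for network in ALLOWED_SOURCES)
```

Anything not explicitly listed is rejected, which is the opposite of the wide-open inbound rule that exposed the database in the first place.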

The latency issue can be broken down into two parts:

- execution time

- the speed of the data retrieval

Since we had to format the data prior to output, execution could take longer than 3 seconds, so we increased Alexa’s timeout period to wait for responses from JDE. While this did increase latency, Alexa eventually provided us with an output for our requests even if we had to wait longer. To address data retrieval times, we reconfigured our network to access the business services publicly, thereby reducing the complexity of the routing setup.
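One way to reason about the two parts of latency is to time them separately and to give the data retrieval an explicit time budget, so the skill can say something useful instead of staying silent. The following Python sketch illustrates that idea under stated assumptions. It is not how our skill was implemented, and the helper names `timed` and `fetch_with_budget` are hypothetical. Note that AWS Lambda functions default to a 3-second timeout, which may be the limit described above, although that reading is an assumption.

```python
import concurrent.futures
import time

# A small shared pool so slow lookups do not block the caller.
_executor = concurrent.futures.ThreadPoolExecutor(max_workers=2)


def timed(fn, *args):
    """Run fn(*args) and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


def fetch_with_budget(fetch, budget_seconds, fallback):
    """Return fetch() if it finishes within the budget, else fallback.

    The slow call keeps running in its worker thread after the timeout;
    this sketch only stops waiting for it.
    """
    future = _executor.submit(fetch)
    try:
        return future.result(timeout=budget_seconds)
    except concurrent.futures.TimeoutError:
        return fallback
```

Wrapping the retrieval and the formatting steps in `timed` shows which of the two dominates, and the fallback value can be a short spoken message such as "That is taking longer than expected."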

These two challenges presented themselves once we had all the different pieces working. JDE configuration and setup presented their own challenges when we were developing business services in JDeveloper. This groundwork was fundamental, as it required us to understand the development methodology for JDE EnterpriseOne in order to publish business services successfully. With many different technologies working together, custom variations in implementation make issues harder to reproduce and troubleshoot. Adhering to standard practices and establishing configurable templates allowed us to build further on our initial query.

We are excited to bring JDE EnterpriseOne and Alexa together. We hope to provide meaningful solutions to business problems by configuring Alexa to receive voice input, access data from EnterpriseOne through business services, and provide meaningful output.

If you have any comments, or have ideas you’d like to explore, please feel free to leave a comment below.
