The Path Towards a Modern Data Warehouse with Snowflake

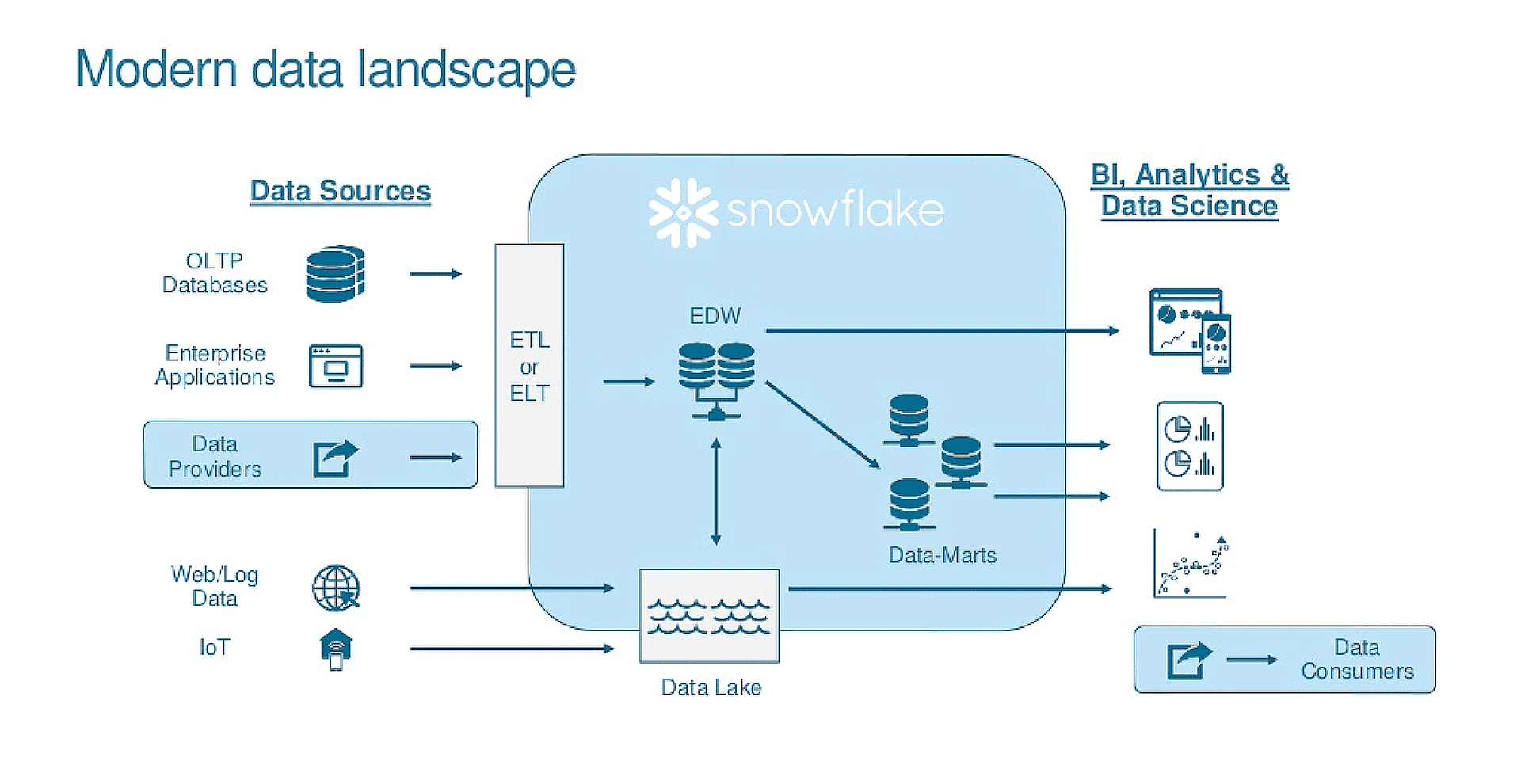

As business processes are adapting, so should your data warehouse. We’ll discuss what moving to a cloud data warehouse entails and how our partner, Snowflake, is providing a simple, efficient, and flexible solution to handling the high volume, variety, and velocity of data companies need to tend to.

Picture courtesy: Snowflake Computing Inc.

The Rising Need of a Modern Data Warehouse

Business Intelligence processes play a key role in all organizations that help various layers from executives to managers to make decisions and analyze their business. The analysis helps the business make better decisions and increase the productiveness of their organizations. Our data management services team understands that certain factors like a few listed below could impact the effectiveness of the analytics.

Snowflake’s unique design and capabilities provide solution to several problems like the ones we discussed above. Let’s look at some of the key features below:

Picture courtesy: Snowflake Computing Inc.

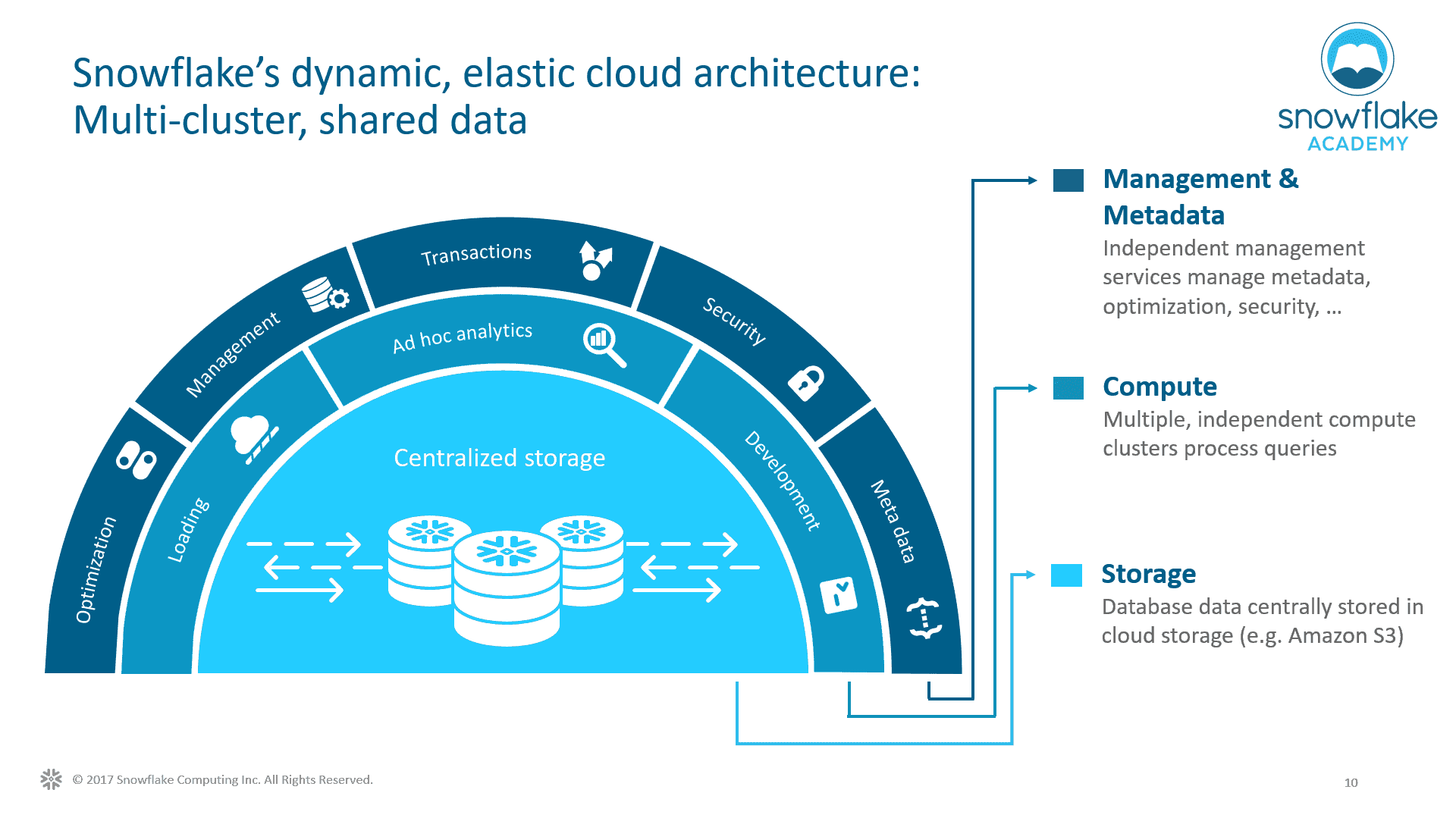

Storage, Compute, and Services

The traditional on premise data warehouses are becoming expensive in terms of hardware and administration. It often needs a dedicated team of database administrators and design experts to monitor and maintain the system. As we all know, “data assets” are ever growing and companies have to estimate the costs upfront in a traditional on-premise data warehouse. This is where CDW (Cloud Data Warehouse) provides flexibility that eliminates the upfront costs by providing with the “pay as you go” pricing option. It also opens up the advantage of exploring options with broader data sets. Statistics show that storage costs have considerably declined over the past few years with data storage getting cheaper proving that integrating data in the cloud makes things easier. Snowflake’s unique architecture separates the service, compute and storage components.

Infinite Scalability and Elasticity

Analytics is critical to business and users often want to get results within a reasonable time frame. The limitation with the conventional data warehouse is the slowness of the system which results with workloads to run within the limited resource availability. The cloud data warehouse has the perfect solution of elastic scaling where the resources can be scaled up and down at any given time based on number of workloads.

Have you ever wondered how this works?

Snowflake uses Virtual Warehouses (VW) for the query processing. Each VW is an independent cluster, and can be scaled up and down as the need changes. The design allows multiple clusters to access the same database tables allowing data sharing without any data movement. Costs are incurred only when the VW is being used/up and running. Multi cluster warehouses can be created by just specifying name and size while Snowflake handles all the provisioning and configuration.

Say Goodbye to Work Load Contentions

It is a common scenario where multiple processes like data loads and user report queries access the system at the same time. This leads to a problem where the user might see partial/inaccurate data or end up modifying the report execution times which can impact the business team both in terms of time and value. Snowflake’s unique design distributes the loads on virtual warehouses across multiple compute clusters. Let’s look at how contentions are avoided:

For example, we can assign ETL and business user runs to run on their individual cluster – Data Cluster and Business Cluster. By doing this we avoid any bottlenecks that happen on loads and the user runs, as they run independently of each other. Further, it is also possible to clone a table, schema, or a database across different environments to get updated/refreshed results continuously.

Handle Various Data Forms

Data modeling is very critical because it represents all the entities of the business.

In the modern cloud world, data modeling is agile and can change overtime. To support this, Snowflake handles structured and semi-structured (JSON, XML, etc.) data very well. In addition to supporting the common SQL data types (Numeric, String & Binary, Data & Time), Snowflake has VARIANT, OBJECT, and ARRAY data types to support semi structured data. All the common database DDL operations can be performed using the SQL: ANSI.

Security

The most common concern in cloud systems is the potential risk to the data security, security of users, and access to sensitive information. Snowflake provides the network, user, and data security similar to other cloud providers. In addition, there are some great features for data recovery and secure views offered by Snowflake.

Better Together

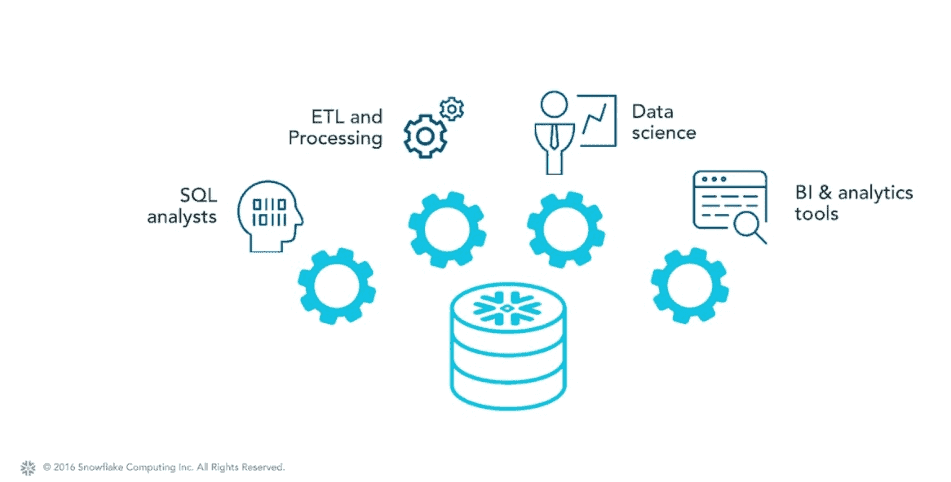

Even though Snowflake on its own is a powerful tool, it can work even more efficiently when paired with a few companions. Here are a couple of popular solutions to assist with data ingestion, orchestration, and transformation.

Prefect

Prefect is an open-source workflow orchestration tool that enables businesses to automate data pipelines and workflows. Together with Snowflake, Prefect can provide a powerful solution for businesses looking to streamline their data processing and analysis.

Using Prefect with Snowflake involves setting up workflows that automate the movement and processing of data between Snowflake and other data sources. With Prefect’s built-in Snowflake tasks, businesses can easily extract data from source systems, transform it, and load it into Snowflake. This process can be fully automated and scheduled, allowing businesses to save time and resources while ensuring accurate and timely data processing.

The benefits of using Prefect with Snowflake include improved data accuracy, reduced data processing time, and increased efficiency in data management. By automating data workflows and minimizing manual intervention, businesses can also reduce the risk of errors and ensure that data is processed consistently and reliably.

dbt

dbt, or data build tool, is an open-source tool that allows you to transform your raw data into business-ready insights. When combined with snowflake, it is a very powerful tool to perform all of your data modeling and transformation required.

It uses a combination of SQL and a custom language, Jinja, which enables tons of macros that can simplify your SQL. This makes it easy for data engineers to adopt and design the various models in the environment. Once the models are all completed, jobs can be configured to schedule how frequently your models will be refreshed, and how they’ll be refreshed (i.e. as a full refresh, incremental, etc…).

dbt also has several features in it that help improve data governance so that your end users can have more trust in their data. For the data engineers, dbt’s version-control helps with collaboration and enables users to share work with their team. Moreover, dbt provides a robust testing framework that ensures the quality of your data and helps you catch errors before they impact your business (i.e. primary keys are unique, required fields are not null, historical data is accurate). Lastly, with dbt docs, all production code and all data lineage is made available to users, so end users have a working data dictionary.

In conclusion, if you’re looking for a powerful data transformation solution, look no further than dbt with Snowflake. By combining the two, you can create a scalable and reliable data pipeline that can help you make better business decisions.

In Conclusion

The traditional on-premise data warehouse model has become outdated due to performance issues, the rise of new data sources, and the growing trend of IoT. Snowflake’s unique design and capabilities provide a flexible and cost-effective solution to these problems with a flexible pay-as-you-go pricing model, independent compute resources, elastic scaling, support for structured and semi-structured data, and robust security measures.

Combine it with another tool or two like dbt and Prefect and you’re on your way to a modernized data program for your organization.

Keep Reading: Agile Analytics – Azure SQL Data Warehouse

There’s more to explore at Smartbridge.com!

Sign up to be notified when we publish articles, news, videos and more!

Other ways to

follow us: