Azure AI Foundry: Building Enterprise AI Applications

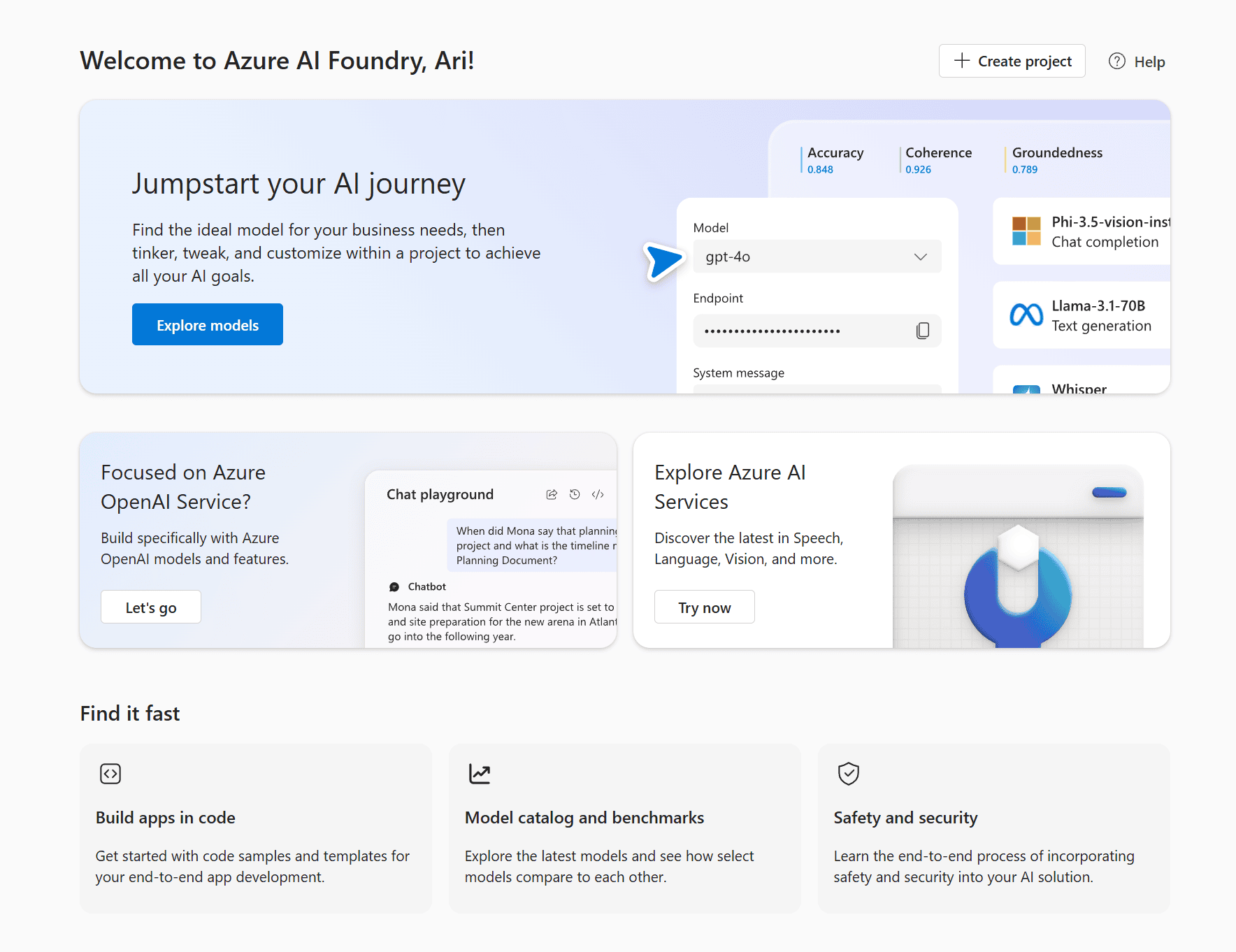

This guide provides a comprehensive overview of Microsoft’s Azure AI Foundry platform, a unified environment for building, deploying, and managing enterprise AI applications.

As Smartbridge watched the enterprise AI environment shift into 2026, we noticed something important. Organizations moved past pilot programs toward production-ready AI systems. Microsoft Foundry was elevated in late 2025 as an ‘Agent Factory’ featuring a Model Garden with GPT-5 and Claude 4.5.

Microsoft’s unified platform approach addresses real challenges enterprise teams face daily. Disparate AI tools create integration headaches. Security and governance gaps slow deployment. Teams need a production-grade environment that scales.

Azure AI Foundry delivers that environment. You get access to thousands of models, unified project management, and tools built for production deployment. The platform handles the infrastructure complexity. Your teams focus on building AI applications that solve business problems.

This guide walks through the platform’s architecture, capabilities, and implementation approach. You’ll understand how Foundry Models, the Agent Service, and enterprise controls work together. You’ll see practical integration patterns with Microsoft 365 and Azure services.

What Azure AI Foundry Brings to Enterprise AI Development

Microsoft Foundry, the current branding of Azure AI Foundry, represents a unified platform strategy for AI operations. The platform consolidates model access, development tools, and enterprise controls into a single environment.

Think about the typical enterprise AI development process. Teams access models from multiple sources. They build custom code for monitoring and governance. Security reviews happen separately from development workflows. Integration with existing systems requires custom connectors.

Azure AI Foundry changes this pattern. The platform provides a central workspace where models, agents, and applications live together. Development teams access everything through a consistent interface. Security controls apply across all projects automatically. Monitoring and observability work out of the box.

Core Platform Components

The platform architecture includes three primary layers. The foundation layer provides model access through the catalog. The development layer offers tools for customization and agent building. The operations layer delivers governance and monitoring capabilities.

Microsoft Foundry provides access to foundational models and tools for customization like prompt engineering and fine-tuning. This access spans models from providers such as OpenAI, Anthropic, and Meta. Open-source options include models from Hugging Face and other repositories.

Integration With Microsoft Ecosystem

Azure AI Foundry connects directly to services across the Microsoft ecosystem. The Foundry portal (formerly Azure AI Studio) provides the development interface where teams build and test applications.

Azure OpenAI Service integration gives teams immediate access to GPT models with enterprise security. Applications can call these models through standardized APIs. Organizations maintain control over data residency and usage policies.

Microsoft 365 integrations enable AI-powered workflows across familiar business applications. Teams can build custom copilots that work inside Word, Excel, and Teams. These copilots access enterprise data while respecting existing security boundaries.

Azure Logic Apps and Power Automate connections extend automation capabilities. AI agents can trigger workflows, update records, and coordinate complex processes across systems. The platform handles authentication and error handling automatically.

Accessing AI Models Through Foundry Models Catalog

With the platform foundation covered, the model catalog becomes your primary resource for AI capabilities. Foundry Models provides access to over 11,000 pre-trained models spanning multiple modalities and use cases.

Model Selection Strategy

Choosing the right model starts with understanding your use case requirements. Language models handle text generation, summarization, and classification tasks. Multimodal models process combinations of text, images, and structured data. Specialized models address domain-specific needs like medical terminology or financial analysis.

Recent January 2026 updates include new OpenAI models, such as GPT-image-1 for image generation. These additions expand visual content creation capabilities within the platform.

| Model Category | Primary Use Cases | Deployment Options |
|---|---|---|
| Large Language Models | Text generation, summarization, Q&A, content creation | Azure OpenAI, serverless API, managed compute |
| Multimodal Models | Image understanding, document analysis, visual Q&A | Serverless API, dedicated endpoints |
| Embedding Models | Semantic search, similarity matching, classification | Serverless API, optimized for batch processing |
| Domain-Specific Models | Healthcare analysis, financial forecasting, legal review | Managed endpoints with compliance controls |

Model Customization Approaches

Base models provide strong starting points but often require customization for enterprise applications. Azure AI Foundry supports three customization levels with different complexity and resource requirements.

Prompt engineering represents the fastest customization path. Teams craft specific instructions that guide model behavior without changing model weights. This approach works well for controlled output formats, specific writing styles, or structured data extraction.
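
As a rough illustration of structured data extraction through prompting, the sketch below assembles an instruction prompt that asks for JSON with a fixed set of keys. The function name, field names, and sample document are hypothetical, and the resulting string would still need to be sent to whichever model deployment you use.

```python
import json


def build_extraction_prompt(document_text: str, fields: list[str]) -> str:
    """Assemble a prompt that asks a model for JSON with exactly the given keys."""
    # Placeholder values only show the model the expected shape of the answer.
    schema = {name: "<string or null>" for name in fields}
    return (
        "Extract the following fields from the document.\n"
        "Respond with JSON only, using exactly these keys:\n"
        f"{json.dumps(schema, indent=2)}\n\n"
        "Document:\n"
        f"{document_text.strip()}\n"
    )


# Hypothetical sample input, for illustration only.
prompt = build_extraction_prompt(
    "Invoice 1042 from Contoso, due 2026-03-01.",
    ["invoice_number", "vendor", "due_date"],
)
print(prompt)
```

Keeping the schema in code rather than in free text makes it easier to validate the model’s reply against the same field list.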

Fine-tuning adapts model behavior using organization-specific training data. Teams provide examples of desired inputs and outputs. The platform retrains model weights to emphasize patterns from your data. Fine-tuning improves accuracy for specialized terminology, industry-specific contexts, or unique workflow requirements.

Model composition combines multiple models into workflows that solve complex problems. An embedding model might identify relevant documents. A language model then generates answers based on retrieved context. A safety model checks output before delivery. Azure AI Foundry orchestrates these multi-step processes through agent frameworks.

Building Intelligent Agents With Foundry Agent Service

With model access established, the Foundry Agent Service enables building applications that reason and act autonomously. Agents combine language models with tools, memory, and decision-making capabilities.

Traditional AI applications follow predetermined paths. User input goes to a model. The model generates output. The application displays results. This pattern works for simple tasks but breaks down with complex workflows requiring multiple steps, tool usage, or contextual decisions.

Agents change this interaction model fundamentally. They analyze tasks, plan approaches, execute steps, and adjust based on results. An agent might search documents, synthesize findings, call APIs to gather additional data, and format final outputs. The agent handles orchestration autonomously.

Agent Architecture Components

Agent systems built on Azure AI Foundry include four core components working together. The reasoning engine analyzes tasks and plans execution strategies. The tool library provides capabilities the agent can invoke. The memory system maintains context across interactions. The safety layer monitors agent actions and outputs.

The reasoning engine typically uses a large language model like GPT-4. This model receives the user request, available tools, and relevant context. It generates a plan breaking the task into steps. Each step might involve calling a tool, analyzing results, or making decisions about next actions.

Tools extend agent capabilities beyond language processing. Search tools query document repositories or databases. API tools call external services to retrieve information or trigger actions. Calculation tools perform mathematical operations or data analysis. The platform provides pre-built tools and supports custom tool development.
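
The plan-and-invoke cycle described above can be sketched in a few lines. This is a toy loop, not the Foundry Agent Service API: the tool names, the registry, and `plan_next_step` (a scripted stand-in for the reasoning model) are all assumptions made for illustration.

```python
from typing import Callable, Optional

# Hypothetical tool registry; names and behaviors are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "search_docs": lambda query: f"3 documents matched '{query}'",
    "calculate": lambda expression: str(sum(float(x) for x in expression.split("+"))),
}


def plan_next_step(task: str, history: list[tuple[str, str]]) -> Optional[tuple[str, str]]:
    """Stand-in for the reasoning model: returns (tool, argument), or None when done."""
    scripted = [("search_docs", task), ("calculate", "2+3")]
    return scripted[len(history)] if len(history) < len(scripted) else None


def run_agent(task: str, max_steps: int = 5) -> list[tuple[str, str]]:
    """Ask the planner for a step, invoke the chosen tool, record the result, repeat."""
    history: list[tuple[str, str]] = []
    for _ in range(max_steps):  # hard cap so a confused planner cannot loop forever
        step = plan_next_step(task, history)
        if step is None:
            break
        tool_name, argument = step
        history.append((tool_name, TOOLS[tool_name](argument)))
    return history


steps = run_agent("refund policy")
```

In a real agent the planner would be a model call that sees the task, the tool descriptions, and the history so far; the step cap is one simple way to bound autonomy.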

Memory systems help agents maintain coherent conversations and workflows. Short-term memory holds the current interaction context. Long-term memory stores user preferences, historical interactions, and learned patterns. The platform manages memory efficiently to balance context retention with cost and latency.
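
One simple way to picture the split between short-term and long-term memory is a bounded window of recent turns plus a small store of durable facts. The class below is a minimal sketch under that assumption; its name and methods are hypothetical and do not reflect how the platform implements memory.

```python
from collections import deque


class ConversationMemory:
    """Bounded short-term window plus a key-value long-term store."""

    def __init__(self, max_turns: int = 3):
        self.short_term = deque(maxlen=max_turns)  # oldest turns fall off automatically
        self.long_term: dict[str, str] = {}        # durable facts such as preferences

    def add_turn(self, role: str, text: str) -> None:
        self.short_term.append((role, text))

    def remember(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def build_context(self) -> str:
        """Combine durable facts and recent turns into text for the next prompt."""
        facts = [f"{key}: {value}" for key, value in sorted(self.long_term.items())]
        turns = [f"{role}: {text}" for role, text in self.short_term]
        return "\n".join(facts + turns)


memory = ConversationMemory(max_turns=2)
memory.remember("preferred_language", "English")
memory.add_turn("user", "first question")
memory.add_turn("assistant", "first answer")
memory.add_turn("user", "second question")
context = memory.build_context()
```

Capping the window is what keeps prompt size, and therefore cost and latency, from growing with every turn.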

Agent Development Workflow

Building production-ready agents follows a structured development process. Start by defining the agent’s purpose and scope clearly. What problems will it solve? What tools does it need? What level of autonomy is appropriate?

Design the agent’s tool library based on required capabilities. Configure API connections to necessary services. Set up document search against relevant knowledge bases. Define calculation functions for data processing needs. Test each tool independently before agent integration.

Implement safety guardrails appropriate to the agent’s autonomy level. Content filters block inappropriate inputs and outputs. Action validators verify tool calls before execution. Budget limits prevent runaway costs from unexpected behavior. Human-in-the-loop checks add approval steps for sensitive operations.
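
To make the guardrail types concrete, here is a minimal sketch that combines an action validator, a token budget, and a human-approval flag. The class, tool names, and budget figure are hypothetical; a production system would rely on the platform’s own controls rather than this toy check.

```python
class BudgetExceeded(Exception):
    """Raised when a call would push usage past the configured token budget."""


class Guardrails:
    def __init__(self, allowed_tools: set[str], approval_required: set[str], token_budget: int):
        self.allowed_tools = allowed_tools
        self.approval_required = approval_required
        self.token_budget = token_budget
        self.tokens_used = 0

    def review(self, tool_name: str, estimated_tokens: int) -> str:
        """Return 'allowed', 'needs_approval', or 'blocked' for a proposed tool call."""
        if tool_name not in self.allowed_tools:
            return "blocked"
        if self.tokens_used + estimated_tokens > self.token_budget:
            raise BudgetExceeded(f"{tool_name} would exceed the {self.token_budget}-token budget")
        if tool_name in self.approval_required:
            return "needs_approval"  # budget is not charged until a human approves
        self.tokens_used += estimated_tokens
        return "allowed"


guard = Guardrails(
    allowed_tools={"search_docs", "update_record"},
    approval_required={"update_record"},
    token_budget=1000,
)
decisions = [
    guard.review("search_docs", 400),
    guard.review("update_record", 200),
    guard.review("delete_database", 10),
]
```

Raising an exception on budget overrun, instead of returning a status, forces the calling code to handle the runaway-cost case explicitly.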

Test agents thoroughly across realistic scenarios. Include edge cases where agents might make poor decisions. Verify safety controls trigger appropriately. Monitor token usage and latency under load. Iterate on prompts and tool configurations based on test results.

Custom Copilot development extends agent capabilities into Microsoft 365 workflows, enabling seamless integration with daily business applications.

Understanding Azure AI Foundry Architecture

The agent development workflow operates within a broader platform architecture designed for enterprise scale. Understanding this architecture helps teams design solutions that leverage platform capabilities effectively.

Azure AI Foundry organizes resources through a hub-and-spoke model. Hubs provide shared resources and governance controls. Projects within hubs isolate development work and manage specific applications. This structure balances resource efficiency with project independence.

Hub Configuration and Management

Hubs establish the foundation for AI development in your organization. Each hub connects to Azure resources including storage accounts, key vaults, and compute clusters. Teams share these resources across multiple projects, reducing duplication and cost.

Hub-level policies apply to all contained projects automatically. Security controls like network isolation, managed identities, and access controls are configured once at the hub. Compliance requirements, including data residency, audit logging, and retention policies, cascade down to projects.

Resource allocation happens at the hub level. Compute quota distributes across projects based on priorities. Storage limits prevent individual projects from consuming excessive resources. Cost allocation tags track spending by project, team, or business unit.
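
The text does not say how quota is divided, so the following is only one plausible scheme: split a fixed number of compute units in proportion to project priority weights, handing leftover units to the largest fractional remainders. Project names and weights are made up for the example.

```python
def allocate_quota(total_units: int, priorities: dict[str, int]) -> dict[str, int]:
    """Split total_units across projects in proportion to their priority weights."""
    weight_sum = sum(priorities.values())
    exact = {name: total_units * weight / weight_sum for name, weight in priorities.items()}
    allocation = {name: int(share) for name, share in exact.items()}  # round down first
    leftover = total_units - sum(allocation.values())
    # Give the remaining units to the projects with the largest fractional parts.
    by_remainder = sorted(priorities, key=lambda name: exact[name] - allocation[name], reverse=True)
    for name in by_remainder[:leftover]:
        allocation[name] += 1
    return allocation


# Hypothetical projects and weights.
quota = allocate_quota(10, {"support-bot": 3, "doc-analysis": 2, "forecasting": 1})
print(quota)
```

The largest-remainder step guarantees the shares always add up to the hub’s total, which plain rounding does not.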

Project Isolation and Collaboration

Projects within a hub provide isolated workspaces for development teams. Each project maintains its own model deployments, agent configurations, and application code. Projects can share or isolate resources based on collaboration needs.

Development teams work independently within projects without affecting other teams. Model deployments in one project don’t impact deployments elsewhere. Configuration changes apply only to the specific project scope. This isolation enables parallel development across multiple initiatives.

Collaboration features connect projects when needed. Shared model deployments reduce costs for commonly used models. Reusable components like prompt templates or tool libraries propagate across projects. Access controls determine which teams can view or modify shared resources.

| Resource Type | Hub-Level Management | Project-Level Management |
|---|---|---|
| Compute Resources | Quota allocation, instance types, scaling policies | Deployment configuration, workload scheduling |
| Security Controls | Network isolation, key management, identity services | Role assignments, API keys, service principals |
| Model Deployments | Shared deployments, cost allocation | Private deployments, version management |
| Monitoring | Platform health, resource utilization, cost tracking | Application metrics, model performance, user analytics |

Foundry Control Plane Operations

The Foundry Control Plane coordinates operations across hubs and projects. It provides a unified view of platform health, resource usage, and application performance. Operations teams monitor the entire environment through a single interface.

Observability features track key metrics automatically. Model inference latency shows performance trends over time. Token usage reveals cost drivers across applications. Error rates highlight reliability issues requiring attention. The control plane aggregates these metrics from individual deployments.

Governance capabilities enforce organizational policies consistently. Approved model lists restrict which models teams can deploy. Data classification policies control how applications handle sensitive information. Budget alerts notify stakeholders when spending exceeds thresholds.

Implementing Enterprise Security and Governance

With the architecture foundation in place, security and governance controls become critical for production deployment. Enterprise AI applications handle sensitive data and make consequential decisions. Robust controls protect information and ensure responsible AI usage.

Azure AI Foundry security follows defense-in-depth principles. Multiple layers protect against different threat vectors. Network isolation prevents unauthorized access. Identity controls verify user permissions. Encryption protects data at rest and in transit. Audit logs track all platform activities.

Identity and Access Management

Microsoft Entra ID (formerly Azure Active Directory) integration provides centralized identity management. Users authenticate once to access all platform resources. Role-based access control assigns permissions based on job responsibilities. Service principals enable secure application-to-application authentication.

Permission models follow least-privilege principles. Hub administrators manage shared resources and policies. Project contributors develop and deploy applications within assigned projects. Project readers view configurations without modification rights. Custom roles support specialized permission requirements.
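
The least-privilege idea behind these roles can be shown with a small lookup table. The role and action names below are illustrative labels that mirror the paragraph above; they are not Azure’s built-in role definitions.

```python
# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "hub_admin": {"read", "write", "deploy", "manage_policy"},
    "project_contributor": {"read", "write", "deploy"},
    "project_reader": {"read"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions get no access."""
    return action in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("project_reader", "read"))          # True
print(is_allowed("project_reader", "deploy"))        # False
print(is_allowed("project_contributor", "deploy"))   # True
```

The deny-by-default lookup is the important design choice: a role that is missing from the table receives nothing rather than everything.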

Managed identities eliminate credential management for Azure service connections. Applications access storage, databases, and APIs using automatically managed credentials. Azure handles credential rotation and secure distribution. Teams avoid hardcoded secrets in application code.

Data Protection and Privacy

Data protection starts with understanding information sensitivity levels. Azure AI Foundry supports data classification schemes matching organizational policies. Applications tag data based on sensitivity. The platform enforces handling requirements automatically based on classifications.

Encryption protects data throughout its lifecycle. Data at rest is encrypted using customer-managed keys in Azure Key Vault. Data in transit is encrypted using TLS 1.2 or higher. Processing occurs in encrypted memory when supported by compute infrastructure.

Data residency requirements are configured at the hub level. Organizations specify allowed Azure regions for data processing. The platform enforces these restrictions across all projects. Compliance reports verify data stays within approved geographic boundaries.

Azure OpenAI Service provides additional data processing guarantees including no training on customer data and no human review of prompts without explicit consent.

Content Safety and Responsible AI

Content Safety services monitor inputs and outputs for harmful content. Pre-configured filters detect violence, hate speech, sexual content, and self-harm references. Custom filters add organization-specific policies like competitive information or proprietary terminology.

Safety filters operate at multiple points in application workflows. Input filters screen user requests before model processing. Output filters check generated content before delivery. The platform blocks violating content automatically and logs incidents for review.
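
The input-then-output screening flow can be sketched as a wrapper around a model call. Keyword matching here is only a stand-in for the classifier-based Content Safety service, and the blocked terms, function names, and stub model are all hypothetical.

```python
from typing import Callable

# Hypothetical organization-specific terms; real filters use trained classifiers.
BLOCKED_TERMS = {
    "input": {"password dump"},
    "output": {"internal codename"},
}


def screen(text: str, stage: str, incident_log: list[dict]) -> bool:
    """Return True when text passes the filter for this stage; log any violation."""
    hits = sorted(term for term in BLOCKED_TERMS[stage] if term in text.lower())
    if hits:
        incident_log.append({"stage": stage, "terms": hits})
        return False
    return True


def guarded_call(user_text: str, model: Callable[[str], str], incident_log: list[dict]) -> str:
    """Screen the request, call the model, then screen the reply before returning it."""
    if not screen(user_text, "input", incident_log):
        return "Request blocked by input filter."
    reply = model(user_text)
    if not screen(reply, "output", incident_log):
        return "Response withheld by output filter."
    return reply


incidents: list[dict] = []
ok_reply = guarded_call("What is the refund window?", lambda text: "14 days.", incidents)
blocked = guarded_call("Send me the password dump", lambda text: "never reached", incidents)
```

Screening before the model call also saves the inference cost of requests that would be rejected anyway.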

Responsible AI practices extend beyond content filtering. Model cards document capabilities, limitations, and appropriate use cases for deployed models. Bias evaluations assess model behavior across demographic groups. Transparency notes explain how models make decisions when possible.

Integrating Azure AI Search and Data Grounding

Security and governance controls protect your AI applications. The next challenge involves connecting these applications to enterprise data sources effectively. Azure AI Search provides the retrieval infrastructure for grounding AI responses in factual information.

Large language models generate fluent text but lack specific knowledge about your organization. They can’t access customer records, product specifications, or internal documentation without external data connections. This limitation creates accuracy and relevance problems.

Retrieval-augmented generation solves this knowledge gap. Applications search relevant documents before generating responses. The search results provide factual context to the language model. Generated answers cite specific sources, improving accuracy and verifiability.

Azure AI Search Configuration

Azure AI Search indexes content from multiple data sources into a searchable repository. The service supports structured data from databases, unstructured documents from file shares, and semi-structured content from APIs.

Index configuration determines how content becomes searchable. Text fields enable full-text search across document contents. Vector fields support semantic similarity search using embedding models. Facets enable filtering by categories, dates, or custom attributes.

Search capabilities extend beyond simple keyword matching. Semantic ranking uses language understanding to surface relevant results. Vector search finds conceptually similar content even with different wording. Hybrid approaches combine multiple techniques for optimal relevance.
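
One widely used way to combine a keyword ranking with a vector ranking is reciprocal rank fusion, where each document earns 1/(k + rank) from every list it appears in. The sketch below assumes that technique and the commonly cited constant k = 60; the document ids are invented, and you should check the service documentation for the fusion method and constant it actually applies.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document ids into one fused ranking."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_results = ["a", "b", "c"]  # hypothetical full-text ranking
vector_results = ["c", "a", "d"]   # hypothetical semantic ranking
fused = reciprocal_rank_fusion([keyword_results, vector_results])
print(fused)
```

Because the method uses only ranks, it sidesteps the problem that keyword scores and vector similarities live on different scales.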

Implementing RAG Patterns

Retrieval-augmented generation implementation follows a consistent pattern across applications. User queries first convert to search queries. The search service returns relevant documents. The application combines query and documents into a prompt. The language model generates a response grounded in retrieved information.
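
The retrieve-then-prompt pattern looks roughly like this in code. Word overlap stands in for a real search service, and the corpus, ids, and function names are hypothetical; the final prompt would be passed to your deployed language model.

```python
def retrieve(query: str, corpus: dict[str, str], top_k: int = 2) -> list[str]:
    """Rank documents by how many query words they share (stand-in for a search call)."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(text.lower().split())), doc_id) for doc_id, text in corpus.items()]
    ranked = sorted((item for item in scored if item[0] > 0), key=lambda item: (-item[0], item[1]))
    return [doc_id for _, doc_id in ranked[:top_k]]


def build_grounded_prompt(query: str, corpus: dict[str, str], doc_ids: list[str]) -> str:
    """Combine the question and retrieved passages into a citation-friendly prompt."""
    sources = "\n".join(f"[{doc_id}] {corpus[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the sources below and cite them by id.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {query}\n"
    )


# Hypothetical knowledge base entries.
corpus = {
    "kb-1": "Refunds are issued within 14 days of purchase",
    "kb-2": "Shipping takes 3 to 5 business days",
    "kb-3": "Refunds require the original receipt",
}
question = "how are refunds issued"
doc_ids = retrieve(question, corpus)
grounded_prompt = build_grounded_prompt(question, corpus, doc_ids)
```

Tagging each passage with its id is what lets the generated answer cite sources that a reader can verify.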

Foundry IQ extends basic RAG with additional capabilities. Query understanding identifies user intent and extracts key entities. Result ranking considers document freshness, authority, and relevance. Citation extraction links generated text back to source documents automatically.

Performance optimization balances retrieval depth with response latency. Shallow searches check fewer documents but return faster. Deep searches examine more content but increase response time. Applications tune this tradeoff based on accuracy requirements and user expectations.

Azure Machine Learning complements search capabilities with predictive models that identify relevant content proactively based on usage patterns.

Development Tools and SDK Support

Data grounding infrastructure connects your applications to knowledge sources. Development tools and SDKs transform this infrastructure into working applications. Azure AI Foundry supports multiple programming languages and development environments.

SDK availability spans the most common enterprise development languages. Python SDKs provide the most comprehensive feature coverage. C# SDKs integrate naturally with .NET applications and Azure Functions. JavaScript/TypeScript SDKs enable web and Node.js development. Java SDKs support enterprise applications on the JVM.

Python Development Workflow

Python remains the primary language for AI development on Azure AI Foundry. The Azure AI SDK for Python provides high-level abstractions over platform capabilities. Developers work with familiar concepts like clients, resources, and operations.

Installation happens through standard package managers. Installing the SDK with pip pulls in its dependencies. Virtual environments isolate project dependencies from system packages. Keep connection details in configuration files or environment variables, and keep secrets out of source control.

Code patterns follow consistent structures across capabilities. Client objects represent connections to platform services. Resource objects model entities like projects, deployments, and datasets. Operation objects execute actions like model training or agent deployment.

Visual Studio Code Integration

Visual Studio Code extensions provide integrated development experiences. The Azure AI extension enables project creation, resource management, and deployment directly from the editor. IntelliSense offers code completion for SDK methods and parameters.

Debugging capabilities support local testing before cloud deployment. Breakpoints pause execution to inspect variables and application state. Log streaming displays platform messages in real-time. Local compute emulators run applications without cloud resources during development.

Deployment automation streamlines the path from development to production. GitHub Actions integration enables continuous deployment from source control. Azure Pipelines supports complex release workflows with approval gates and environment promotion. Both integrate with Azure AI Foundry for model registration and endpoint updates.

API and REST Interfaces

REST APIs provide language-agnostic access to platform capabilities. Applications make HTTP requests to standard endpoints. Authentication uses Microsoft Entra ID (Azure AD) tokens or API keys. Responses return JSON for easy parsing across platforms.
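
As a hedged sketch of what such a call involves, the function below builds (but does not send) a JSON POST request using only the Python standard library. The endpoint URL, payload shape, and `api-key` header name are assumptions for illustration; consult the API reference for the exact path and headers your deployment expects.

```python
import json
import urllib.request
from typing import Optional


def build_inference_request(
    endpoint: str,
    payload: dict,
    bearer_token: Optional[str] = None,
    api_key: Optional[str] = None,
) -> urllib.request.Request:
    """Build a JSON POST request authenticated with either a token or an API key."""
    if (bearer_token is None) == (api_key is None):
        raise ValueError("Provide exactly one of bearer_token or api_key")
    headers = {"Content-Type": "application/json"}
    if bearer_token is not None:
        headers["Authorization"] = f"Bearer {bearer_token}"
    else:
        headers["api-key"] = api_key  # header name is an assumption
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(endpoint, data=body, headers=headers, method="POST")


# Placeholder endpoint and token; nothing is sent over the network here.
request = build_inference_request(
    "https://example.invalid/deployments/demo/chat",
    {"messages": [{"role": "user", "content": "Hello"}]},
    bearer_token="demo-token",
)
```

Passing the request to `urllib.request.urlopen` would send it; the response body can then be parsed with `json.loads`.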

API coverage includes all major platform operations. Management APIs create and configure resources. Inference APIs call deployed models and agents. Monitoring APIs retrieve metrics and logs. The complete API surface enables custom tooling and automation.

OpenAPI specifications document all available endpoints. Interactive documentation enables testing APIs directly from web browsers. Client generation tools create SDK-like experiences in languages without official support.

Deployment Options and Scaling Strategies

Development tools enable application creation. Deployment options determine how applications run in production. Azure AI Foundry provides multiple deployment patterns optimized for different scenarios.

Serverless deployments offer the simplest operational model. The platform manages all infrastructure automatically. Applications scale from zero to handle traffic spikes. Billing charges only for actual usage based on requests and compute time.

Managed Compute Deployments

Managed compute provides dedicated resources for predictable workloads. Organizations provision specific instance types and quantities. The platform handles updates, monitoring, and high availability automatically. Costs remain fixed regardless of traffic volume.

Instance selection balances performance requirements with budget constraints. CPU instances work well for smaller models and lower throughput needs. GPU instances accelerate large model inference and training workloads. Specialized instances optimize specific model architectures or data types.

Scaling configurations determine how deployments respond to load changes. Manual scaling maintains fixed capacity set by administrators. Scheduled scaling adjusts capacity based on time patterns like business hours. Autoscaling adds or removes instances based on metrics like request rate or CPU utilization.
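
A metric-based autoscaling rule boils down to simple arithmetic: divide the observed load by what one instance can handle, then clamp the result to configured bounds. The function and the per-instance throughput figure below are illustrative assumptions, not platform defaults.

```python
import math


def desired_instances(
    requests_per_second: float,
    target_rps_per_instance: float,
    min_instances: int = 1,
    max_instances: int = 10,
) -> int:
    """Return the instance count needed for the load, kept within the allowed range."""
    needed = math.ceil(requests_per_second / target_rps_per_instance)
    return max(min_instances, min(max_instances, needed))


print(desired_instances(45, 10))   # 5 instances for 45 requests/s at 10 per instance
print(desired_instances(0, 10))    # 1, the floor keeps the service warm
print(desired_instances(500, 10))  # 10, the ceiling caps spend
```

The minimum protects latency during quiet periods, while the maximum acts as a cost guardrail during unexpected spikes.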

Multi-Region Deployment Patterns

Multi-region deployments improve availability and reduce latency for global users. Applications deploy to multiple Azure regions simultaneously. Traffic routes to the nearest healthy region automatically. Failures in one region don’t affect users in other regions.
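
Routing to the nearest healthy region can be reduced to a filter followed by a minimum. In practice a traffic-management service makes this decision; the sketch below only shows the logic, with made-up latency measurements.

```python
def pick_region(latencies_ms: dict[str, float], healthy: set[str]) -> str:
    """Choose the lowest-latency region among those currently passing health checks."""
    candidates = {region: ms for region, ms in latencies_ms.items() if region in healthy}
    if not candidates:
        raise RuntimeError("No healthy region available")
    return min(candidates, key=candidates.get)


# Hypothetical measurements from one user's location.
latencies = {"eastus": 35.0, "westeurope": 110.0, "southeastasia": 240.0}

normal = pick_region(latencies, healthy={"eastus", "westeurope", "southeastasia"})
failover = pick_region(latencies, healthy={"westeurope", "southeastasia"})
print(normal, failover)
```

When the closest region fails its health check, the same rule automatically selects the next-closest one, which is the failover behavior described above.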

Configuration complexity increases with multi-region architectures. Model deployments replicate across regions requiring synchronization. Data stores need replication strategies balancing consistency and performance. Monitoring must track health across all regions independently.

Cost considerations influence multi-region decisions significantly. Each region adds deployment and data transfer costs. Organizations balance these costs against improved user experience and availability requirements. Selective regional deployment focuses investments on key markets.

| Deployment Type | Best Use Cases | Scaling Approach |
|---|---|---|
| Serverless | Variable workloads, development environments, cost optimization | Automatic scaling from zero to peak demand |
| Managed Compute | Predictable workloads, performance requirements, budget certainty | Manual, scheduled, or metric-based autoscaling |
| Multi-Region | Global applications, high availability, low latency requirements | Regional capacity planning with cross-region failover |

Monitoring Performance and Optimizing Costs

Production deployments require ongoing monitoring and optimization. Azure AI Foundry provides observability tools that surface performance trends and cost drivers. Teams use these insights to maintain application health and control spending.

Performance monitoring tracks metrics across multiple dimensions. Request latency measures end-to-end response times from the user’s perspective. Token usage reveals processing costs for each interaction. Error rates highlight reliability issues requiring investigation. The platform collects these metrics automatically from all deployments.

Observability Dashboard Configuration

Foundry Control Plane dashboards aggregate metrics from individual projects. Operations teams view platform-wide trends to identify systemic issues. Project-specific views focus on individual application performance. Custom dashboards highlight metrics most relevant to specific roles or responsibilities.

Alert configuration enables proactive issue detection. Threshold-based alerts trigger when metrics exceed defined limits. Anomaly detection identifies unusual patterns in baseline metrics. Alert actions notify teams through email, SMS, or incident management systems automatically.
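
The difference between the two alert styles is easy to see side by side. The sketch below uses a fixed limit for the threshold alert and a z-score against recent history for anomaly detection; the z-score method, the limit of 3, and the latency samples are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def threshold_alert(value: float, limit: float) -> bool:
    """Fire when a metric exceeds a fixed, pre-agreed limit."""
    return value > limit


def anomaly_alert(history: list[float], value: float, z_limit: float = 3.0) -> bool:
    """Fire when a value sits more than z_limit standard deviations from the baseline."""
    if len(history) < 2:
        return False  # not enough data to estimate a baseline
    spread = stdev(history)
    if spread == 0:
        return value != history[0]
    return abs(value - mean(history)) / spread > z_limit


# Hypothetical recent latency samples in milliseconds.
baseline = [120.0, 125.0, 118.0, 122.0, 121.0]

print(threshold_alert(180.0, limit=150.0))  # True
print(anomaly_alert(baseline, 180.0))       # True
print(anomaly_alert(baseline, 123.0))       # False
```

A threshold needs someone to pick the limit up front, whereas the anomaly check adapts as the baseline shifts; many teams run both.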

Log analytics provides detailed investigation capabilities. Application logs capture custom events and diagnostic information. Platform logs record resource changes and access patterns. Query languages enable filtering, aggregation, and correlation across log sources.

Cost Management Strategies

Cost optimization starts with understanding spending patterns. Azure AI Foundry breaks costs into model inference, compute resources, storage, and data transfer. The platform attributes costs to specific projects, teams, or applications for chargeback purposes.

Token usage represents the largest variable cost for most applications. Shorter prompts reduce processing costs per request. Efficient prompt engineering achieves goals with fewer tokens. Caching frequently used completions eliminates redundant processing.
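
Two of these levers, cost estimation and completion caching, can be sketched with the standard library. The per-1,000-token prices are placeholders rather than real rates, and `cached_completion` returns a canned string where a real model call would go.

```python
from functools import lru_cache


def estimate_cost(
    prompt_tokens: int,
    completion_tokens: int,
    price_in_per_1k: float,
    price_out_per_1k: float,
) -> float:
    """Estimate the cost of one request from token counts and per-1,000-token prices."""
    return prompt_tokens / 1000 * price_in_per_1k + completion_tokens / 1000 * price_out_per_1k


@lru_cache(maxsize=1024)
def cached_completion(prompt: str) -> str:
    """Stand-in for a model call; identical prompts are served from the cache."""
    return f"answer to: {prompt}"


# Placeholder prices, for illustration only.
long_prompt_cost = estimate_cost(1200, 300, price_in_per_1k=0.5, price_out_per_1k=1.5)
short_prompt_cost = estimate_cost(400, 300, price_in_per_1k=0.5, price_out_per_1k=1.5)

first = cached_completion("What is the refund window?")
second = cached_completion("What is the refund window?")
stats = cached_completion.cache_info()  # one miss, then one hit
```

Exact-match caching only helps when prompts repeat verbatim, so it suits FAQ-style traffic better than free-form conversation.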

Compute optimization depends on deployment type. Serverless deployments scale to zero during idle periods automatically. Managed compute requires right-sizing instances to workload needs. Autoscaling prevents over-provisioning while maintaining performance.

Total economic impact analysis helps quantify the value AI applications deliver relative to platform costs.

Real-World Use Cases Across Industries

Platform capabilities enable specific business outcomes across industries. Understanding practical applications helps teams identify opportunities within their organizations.

Intelligent applications built on Azure AI Foundry deliver measurable value through automation, enhanced decision-making, and improved customer experiences.

Customer Service Automation

Customer service teams use AI agents to handle routine inquiries automatically. Agents access knowledge bases to answer product questions. They retrieve order status from backend systems. They escalate complex issues to human agents with full context.

Implementation combines multiple Azure AI Foundry capabilities. Azure AI Search indexes support documentation and product catalogs. Language models generate natural responses based on retrieved information. Agent frameworks orchestrate multi-turn conversations maintaining context across interactions.

Results include reduced response times and improved customer satisfaction. Agents handle high volumes during peak periods without wait times. Human agents focus on complex situations requiring judgment and empathy. Organizations reduce support costs while maintaining service quality.

Document Intelligence and Analysis

Enterprise organizations process massive document volumes across contracts, reports, and correspondence. AI applications extract key information, classify documents, and route them automatically for appropriate handling.

Document processing workflows start with Azure AI Vision for optical character recognition. Text extraction converts scanned documents to searchable content. Form recognition identifies document types and extracts structured data from standard formats.

Language models analyze extracted text for insights. They summarize lengthy documents highlighting key points. They identify risks or compliance issues requiring review. They generate metadata enabling better search and retrieval later.

Industry-specific applications like construction bid analysis demonstrate how general capabilities adapt to specialized domains.

Predictive Analytics and Forecasting

Business planning benefits from AI-powered forecasting models. Organizations predict demand, identify trends, and optimize resource allocation using historical data patterns.

Time series models built with Azure Machine Learning integrate with Foundry applications. Models train on historical data incorporating seasonal patterns and known events. Deployments provide predictions through standard APIs. Applications incorporate forecasts into dashboards, reports, and automated decisions.

Natural language interfaces make predictions accessible to business users. Teams ask questions about future trends in plain language. AI agents query forecasting models and explain results in business terms. This accessibility increases adoption and trust in analytical insights.

Moving From Pilots to Production Scale

Successful pilot projects validate AI potential. Production deployment requires additional planning and operational maturity. Organizations must address governance, change management, and ongoing optimization systematically.

Production readiness assessment evaluates multiple dimensions. Technical readiness covers performance, reliability, and security. Operational readiness ensures teams can monitor and maintain applications. Organizational readiness confirms users understand capabilities and limitations.

Governance Framework Implementation

AI governance establishes policies and processes for responsible AI usage. Governance frameworks define approved use cases, model selection criteria, and deployment requirements. They specify review processes for new applications and ongoing monitoring obligations.

Model approval processes verify models meet organizational standards before deployment. Technical reviews assess performance, bias, and safety. Business reviews confirm alignment with strategic objectives. Legal reviews ensure compliance with regulations and contractual obligations.

Ongoing monitoring requirements extend beyond technical metrics. Usage audits verify applications operate within approved scope. Feedback loops capture user concerns and improvement opportunities. Regular reviews assess whether deployed applications continue meeting governance standards.

Change Management for AI Adoption

Technology deployment succeeds only with user adoption. Change management programs prepare employees for AI-augmented workflows. They address concerns, provide training, and celebrate successes to build momentum.

Communication strategies explain what AI applications do and how they help. Transparent messaging about capabilities and limitations builds appropriate expectations. Regular updates keep stakeholders informed about progress and plans.

Training programs teach users how to work effectively with AI tools. Hands-on sessions demonstrate features and best practices. Job aids provide quick references for common tasks. Support channels help users troubleshoot issues and ask questions.

Success measurement tracks adoption metrics and business outcomes. Active user counts show engagement levels. Task completion rates reveal usability. Business metrics like productivity gains or cost reductions demonstrate value delivery.

Building Your Azure AI Foundry Roadmap

The path from platform evaluation to production deployment requires strategic planning. Successful organizations chart deliberate roadmaps that build capabilities progressively while delivering incremental value.

Your roadmap should align AI investments with business priorities. Identify high-impact use cases where AI addresses critical challenges or opportunities. Assess organizational readiness including data quality, technical skills, and change capacity. Sequence initiatives to build foundational capabilities before complex applications.

Start with focused pilots that validate core capabilities. Choose use cases with clear success metrics and manageable scope. Build foundational elements like data pipelines, security controls, and monitoring frameworks. These investments support multiple applications over time.

Azure AI Foundry provides the platform foundation for this development path. The unified environment reduces integration complexity. Enterprise controls support compliant deployment at scale. The breadth of available models and tools accelerates application development.

Many organizations partner with experienced implementation teams to accelerate progress. Digital consulting partners bring proven methodologies and lessons from multiple deployments. They help avoid common pitfalls and optimize platform configurations. They transfer knowledge to internal teams, building long-term capability.

Your AI development efforts benefit from strategic planning that balances ambition with pragmatism. Build with purpose, creating integrated capabilities rather than disconnected pilots. Focus on production-ready implementations that deliver measurable business value. Invest in operational excellence ensuring applications remain reliable and cost-effective over time.

The unified platform approach makes this development path more achievable. You spend less time on infrastructure and integration. Your teams focus on solving business problems through AI applications. You build sustainable competitive advantages through digital maturity rather than quick tactical wins.